GeneVA Paper Accepted to WACV 2026!

I’m super excited to share that our paper “GeneVA: A Dataset of Human Annotations for Generative Text to Video Artifacts” has been accepted to WACV 2026!

This project has been an incredible learning experience. Working at NYU’s Immersive Computing Lab under Prof. Qi Sun, I had the opportunity to contribute to research addressing a critical challenge in generative AI: how do we evaluate the quality of AI-generated videos through the lens of human perception?

What We Built

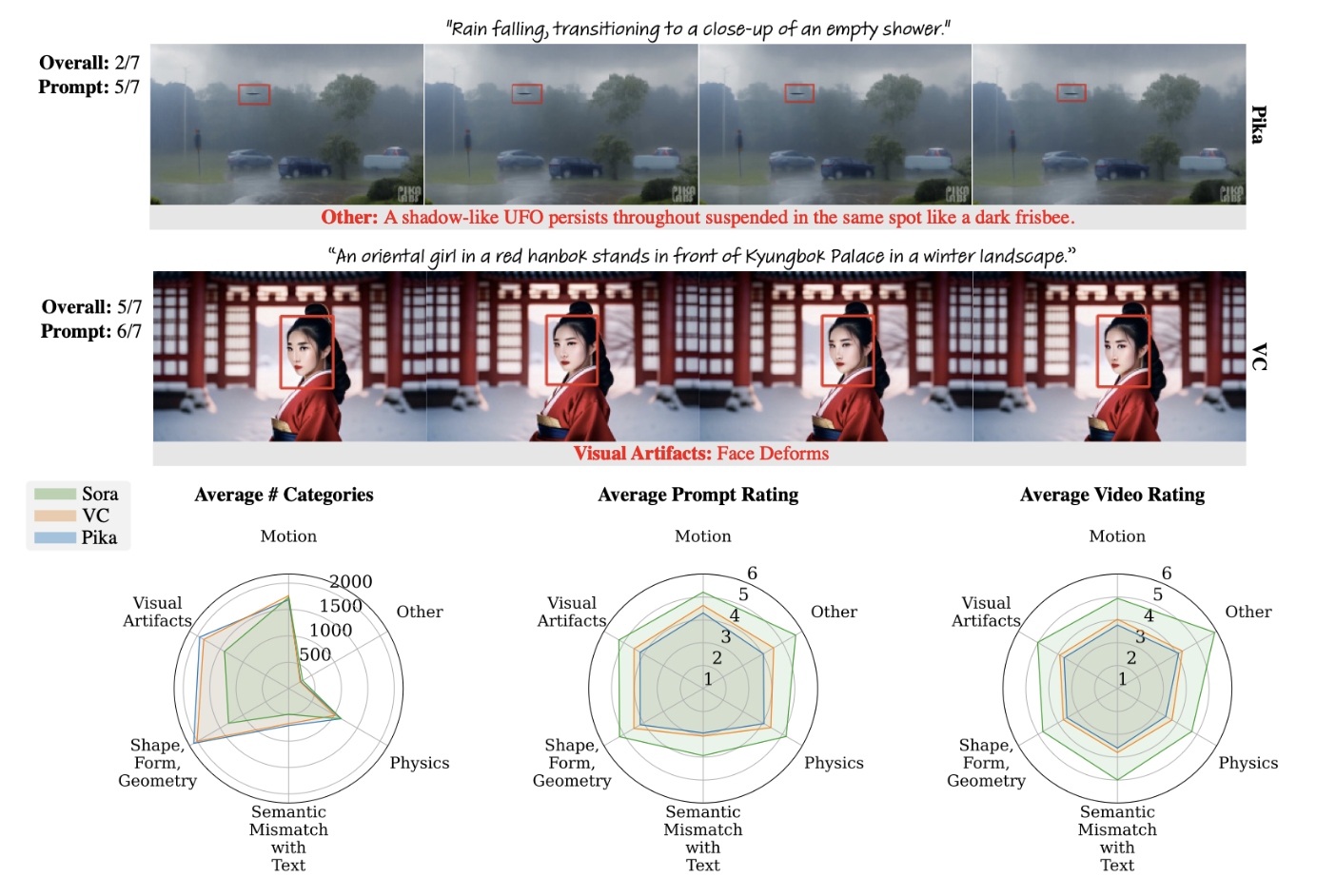

GeneVA is a dataset of human annotations that captures how people perceive visual artifacts and quality issues in AI-generated videos. As text-to-video models become increasingly sophisticated, understanding how humans perceive these artifacts is essential for developing better models and evaluation metrics.

What’s Next

The paper will be presented at the Winter Conference on Applications of Computer Vision in 2026. I’m looking forward to sharing our work with the computer vision community and learning from the discussions at the conference.

You can read the preprint on arXiv and find more details on my publications page.

This work was done in collaboration with Jenna Kang, Patsorn Sangkloy, Kenneth Chen, Niall Williams, and Prof. Qi Sun at NYU’s Immersive Computing Lab.